Ten seconds in we have the quote: "Hue is essentially what color the color is." There is a plant growing out of my face. Is this a tautology, or just a circular definition? I am just a bit disappointed. Just to be clear, I watched a bit more of the video, and liked it, but that sentence got stuck in my craw.

I feel obliged here to mention the launch of the ISCC/AIC Colour Literacy Project. I quote one of the objectives of the project:

To identify and address the most basic, current misconceptions and misinformation about colour, while building a bridge between art and science for 21st century colour education.

The quote from the video is problematic because it uses the word color twice, but with two different meanings. I will get into what those two meanings are in a bit. But first I want to pontificate a bit on how we think about color and how we communicate it.

What is the most salient attribute of color?

Many who teach about color start with the notion of hue. The above video from Flow Graphics starts by talking about hue. That is my first example of a color teacher leading with hue. Call it Exhibit A.

Munsell's three attributes are hue, value, and chroma -- not chroma, value, and hue. That's Exhibit B. The primacy of hue was carried through in the Munsell corporation beyond Munsell's death. F. G. Cooper, in Munsell Manual of Color (Munsell Company, 1929), starts out talking about hue. Exhibit C.

M. Luckiesh wrote a book on color about the same time, Color and Its Applications, Van Nostrand, 1927. Here is a quote from his chapter on terminology. Once again, hue is at the start of the list of attributes. Exhibit D.

How about modern educators on color? David Briggs has a great site with real color science stuff. Here is his webpage on the Dimensions of Colour. Note that he lists hue first in the URL, and that hue gets described first. (I should also point out that he is from Australia, so he can spell color with a u without sounding pretentious. When I spell colour with a u, it is because I intend to sound pretentious.) That was Exhibit E.

Finally, I come to Exhibit F, which says pretty much what I am trying to say. Stephen Westland has a series of wonderful short videos about real color science. As with David, he is allowed to spell color with a u because he is British. Below is a screen shot from a video he has about how we describe color, which is aptly named How We Describe Colour. His quote which echoes my sentiment that the most prominent attribute of color is hue is this: "the most prominent attribute of colour is hue".

Disclaimer: I have no financial ties to either David Briggs or Stephen Westland. If, however, I happen to wander into a pub that they are in, I would likely accept if either bought me a beer. I expect that after the third or fourth beer, I might be persuaded to reciprocate.

From this, I conclude that hue is of critical importance in the description of color. Well, duh.

How do we define hue?

Simple words are the hardest to define. It's like what Satchmo said when asked to define jazz: “If you have to ask what jazz is, you'll never know.” Let's have a look at some definitions of hue.

This is from the Wikipedia entry on hue:

the degree to which a stimulus can be described as similar to or different from stimuli that are described as red, green, blue, and yellow

Attributed to Mark Fairchild, "Color Appearance Models: CIECAM02 and Beyond". Tutorial slides for IS&T/SID 12th Color Imaging Conference.

Stephen Westland has a similar definition of hue: The hue of a color is whether it is red, yellow, blue, green, etc.

Hmmm... same list of four colors. From the first one, I'm not sure if orange is one of the hues. From the second definition, it might be.

Here is David Briggs' definition: Hue refers to the circular scale of "pure" or "saturated" colours formed by the colours seen in the spectrum (red, orange, yellow, green, cyan, blue and violet), together with the non-spectral colours like magenta, seen when the two ends of the spectrum are mixed.

That clears things up a bit. But it still seems that there are a small discrete number of hues. In Briggs' case, there are eight.

But then we have Munsell's hue circle. He listed the pigments and combinations of pigments required to make ten different hues, and further subdivided them into 100 steps of hue. Here is a depiction of 20 steps of hues, based on Munsell.

By Thenoizz

I think it would be safe to say that eight distinctions of hue fit with the everyday usage of the word, but that my wife would cringe if I described the color of a blouse as "three-tenths of the way from red to red-purple". So, Munsell's 100 steps of hue are a bit beyond what we normally think. BTW, Madelaine's credentials in this subject matter are unrivalled. She is the world's leading expert in the field of John the Math Guy's flaws. A very broad field, I might add.

With the Munsell system of 100 hues, we have crossed the line between everyday and scientific usage of the word.

My definition of hue

Before I define hue, I need to define color. I define the word color to be a sensation in the brain which is usually (but not always) initiated by light striking the retina in the back of the eye. Colors can be subdivided into two broad categories: achromatic and chromatic.

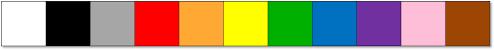

Achromatic colors are those where the brain perceives a balance between the signals from the three types of cones. Achromatic colors include black, gray, white, and all colors in between. Yes, white and black are colors, and more specifically, achromatic colors. I don't care what your art teacher said about black being the absence of color.

Chromatic colors are all the rest. Chromatic colors can be systematically subdivided into groupings according to hue. Various hue groups and methods for determining the group of a color have been developed. In one of the simpler cases, there are eight hue groups: red, orange, yellow, green, cyan, blue, violet, and magenta. All chromatic colors belong in one of these eight groups. The boundaries of these hue groupings are not precisely defined, and the method of assigning a color such as peach or mauve or olive to its appropriate hue group is by eye.

In a system of color developed by Albert Munsell, there are ten hue groupings: R, YR, Y, GY, G, BG, B, PB, P, and RP. Each of these ten groupings is subdivided into ten divisions. For example, the hue group for red includes 1R (which is on the purplish side), 5R (which is pure red), and 10R (which is on the orange side). Assigning a color to a hue group is still done by eye, but this is facilitated by having a color atlas with a few thousand colors to match against. Each of these colors has a hue identifier.
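To make the ten-by-ten arithmetic concrete, here is a minimal sketch that turns a Munsell hue label into a position on a 100-step circle. The function name `munsell_hue_step` and the choice to start counting at the beginning of the R group are assumptions made for illustration, not part of any Munsell standard.

```python
import re

# The ten Munsell hue groups, in the order given above.
HUE_GROUPS = ["R", "YR", "Y", "GY", "G", "BG", "B", "PB", "P", "RP"]

def munsell_hue_step(label: str) -> float:
    """Map a label such as '5R' or '2.5YR' to a position in (0, 100].

    Assumption: counting starts at the beginning of the R group,
    so 10R is step 10 and 10RP closes the circle at step 100.
    """
    match = re.fullmatch(r"(\d+(?:\.\d+)?)([A-Z]{1,2})", label.strip().upper())
    if match is None or match.group(2) not in HUE_GROUPS:
        raise ValueError(f"not a Munsell hue label: {label!r}")
    step = float(match.group(1))
    if not 0 < step <= 10:
        raise ValueError(f"hue step must be in (0, 10]: {label!r}")
    return HUE_GROUPS.index(match.group(2)) * 10 + step

print(munsell_hue_step("5R"))    # 5.0
print(munsell_hue_step("5YR"))   # 15.0
print(munsell_hue_step("10RP"))  # 100.0
```

Under that convention, pure red (5R) and the quintessential yellow-red (5YR) sit ten steps apart, one tenth of the way around the circle.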

Then there's CIELAB. CIELAB values of a color are determined by measuring the light reflected from a surface (or emitted by a light source) and using math to translate these into various quantities, including hue angle. Hue angle is in degrees, specifying a location on the hue wheel. For most practical purposes, the resolution of hue angle could be taken to be one degree, although finer divisions are certainly measurable. A benefit to this hue system is that it can be measured, thus eliminating the subjective judgment of a person.
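The "using math" part is short once you have the CIELAB a* and b* coordinates: hue angle is the direction of the point (a*, b*), and chroma is its distance from the neutral axis. Below is a minimal sketch of that last step only. The function names and the sample a*, b* values are made up for illustration, and the earlier step (turning a measured spectrum into L*, a*, b*) is not shown.

```python
import math

def hue_angle_degrees(a_star: float, b_star: float) -> float:
    """CIELAB hue angle in degrees, folded into the range [0, 360)."""
    return math.degrees(math.atan2(b_star, a_star)) % 360.0

def chroma(a_star: float, b_star: float) -> float:
    """CIELAB chroma: distance of (a*, b*) from the neutral axis."""
    return math.hypot(a_star, b_star)

# Hypothetical a*, b* values, not measurements of any real sample.
print(round(hue_angle_degrees(50.0, 50.0), 1))  # 45.0
print(hue_angle_degrees(40.0, -17.0))           # roughly 337, the same direction as -23
print(chroma(3.0, 4.0))                         # 5.0
```

Note that an angle reported as -23 degrees and one reported as 337 degrees describe the same hue; which form you see depends on the software.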

A quirk

Summarizing, we have identified hue as the most salient characteristic of a color (at least, of a chromatic color), and we have seen that this concept is baked into both Munsell color space and CIELAB.

Now we come to a quirk in the road.

I recall looking at a particularly colorful sunset when I was perhaps 5 years old. I looked at the gradations of color and I said to my sister (who would have been about 8) that pink is just light purple. She quickly corrected me by saying that pink is light red. I reckon that, had my sister not chastised me too harshly, and at such a young age, I would have likely become a world-renowned color scientist by third or maybe fourth grade. As it is, I didn't even think about color again until I was in my mid 30s. Let that be a warning to all older sisters who criticize their younger brothers.

I checked a few dictionaries to see how they define pink. There is a consensus that pink is somewhere between red and white, just like my sister told me. But, I have a quick test. The blocks in the image below are various hues that might be considered pink. Which one do you think is closest to pink?

Will the real pink please stand up?

My vote goes for D. If you pick something else, then it could be that your computer screen is different from mine. Or maybe the software on your computer is doing something different in rendering the colors. Or it could be that your eyes are different from mine. Or then again, maybe I'm just dumb? Ask my wife. She's the authority.

Now for the surprise. Block B is the one that is actually between red and white, at least according to RGB values. That really doesn't look like pink to me! I am going to guess that my wife would call it dusty rose.

[Comment from my wife: "It doesn't look dusty rose. It looks more like light red, but not pink." I appreciate her corrections.]
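Here is a rough sketch of what "between red and white according to RGB values" means. It blends the two colors channel by channel and then checks the HSL hue of the result. The helper names are invented for this example, and the blend fractions and the magenta-leaning comparison color are assumptions, not the actual values of the blocks in the image.

```python
import colorsys

def mix_rgb(c1, c2, t):
    """Linear blend of two 8-bit RGB triples; t=0 gives c1, t=1 gives c2."""
    return tuple(round((1 - t) * x + t * y) for x, y in zip(c1, c2))

def hsl_hue_degrees(rgb):
    """HSL hue of an 8-bit RGB triple, in degrees."""
    r, g, b = (v / 255 for v in rgb)
    h, _lightness, _saturation = colorsys.rgb_to_hls(r, g, b)
    return h * 360

RED = (255, 0, 0)
WHITE = (255, 255, 255)

# Every blend of red and white keeps green equal to blue, so the hue stays at 0.
for t in (0.25, 0.5, 0.75):
    blend = mix_rgb(RED, WHITE, t)
    print(blend, hsl_hue_degrees(blend))

# A hypothetical pink with more blue than green lands on the magenta side of red.
print(hsl_hue_degrees((255, 128, 192)))
```

However much white goes in, the blend never leaves the red hue, which is why a color that reads as pink needs a nudge toward magenta as well.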

At the end of 2016, I posted a blog that delineated regions of various colors in CIELAB space. The plot below is from that blog post. The hue angle for red lies between a hue angle of 27 and 37 degrees. Pink straddles a hue of 0 degrees, -23 to +21 degrees. Pink does not have the same hue as red.

Mauveless chart of color names

These two things suggest that my sister and the common dictionary definition were wrong. Pink is not somewhere between white and red, but rather, is shifted in hue more toward purple. If I had the vocabulary when I was 5, I would have correctly said that pink is light magenta. But alas, the color name magenta had yet to be invented when I was 5. The word magenta as a color name wasn't coined until around 1860.

There is a bit of a dichotomy. From the standpoint of language, pink belongs in the red hue group. On the other hand, my RGB display and CIELAB both suggest that pink and magenta belong in the same hue group.

A second quirk

Brown is a second quirk in the road.

I just asked my wife if she would be comfortable if I said that a chestnut is orange in hue. I won't share her answer exactly, but suffice it to say that once you remove her copious sarcastic jabs at me, the answer boils down to "no". Chestnuts are not orange. Since she is the authority, I'm gonna say that linguistically speaking, chestnuts are not orange, although I could conceive of a nice orange-chestnut glaze on seared scallops. Conceptually, brown is just not a shade of orange.

But Munsell would beg to differ, as shown in the complicated but very clever image below. At the left, we have a page from The Digital Munsell, thanks to Gernot Hoffman. (And I mean that. Thanks, Gernot!) The page shown is the 5YR page, which is Munsell's quintessential yellow-red, i.e. orange. At the right we have pictures from my shopping cart with little squares showing matches to four of the Munsell 5YR colors.

Demonstration that chestnuts are orange in hue

(Once again, thanks to Gernot Hoffman for making this possible)

This isn't just some silly notion that Munsell had during a psychedelic acid trip. According to my mauveless chart (above) showing the locations of color names in CIELAB, brown and orange occupy the same hue angle. According to that blog post, orange occupies the region between 57 and 67 degrees, while brown straddles that, going from 55 to 76 degrees. Brown is darker than orange, and less saturated, but, at least according to CIELAB and Munsell, they have basically the same hue angle.
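The overlap is easy to see if the hue ranges quoted in this post are put into a small lookup. This is only a sketch: the table holds just the four ranges mentioned here, the function name is invented, and treating the endpoints as inclusive is an assumption.

```python
# Hue-angle ranges in degrees, as quoted in this post.
NAME_RANGES = {
    "pink": (-23, 21),
    "red": (27, 37),
    "orange": (57, 67),
    "brown": (55, 76),
}

def names_for_hue(hue_deg):
    """Return every listed color name whose hue range contains hue_deg."""
    # Fold the angle into [-180, 180) so that 350 degrees is treated as -10.
    folded = (hue_deg + 180) % 360 - 180
    return [name for name, (lo, hi) in NAME_RANGES.items() if lo <= folded <= hi]

print(names_for_hue(30))   # ['red']
print(names_for_hue(350))  # ['pink']
print(names_for_hue(60))   # ['orange', 'brown']
```

A hue angle of 60 degrees comes back as both orange and brown, so hue alone cannot tell them apart. Lightness and saturation have to do that job.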

A third quirk

I have a third quirkiness to share about how we classify the colors light blue versus dark blue. I think we can all agree that light blue and dark blue are the same hue? Linguistically, it makes sense, right? But I have a hunch and a little evidence that this might not be the case.

When I look at the rainbow below, I see dark blue to the left of light blue. If you buy into that perception, and you buy into the idea that hue is kinda equated to a position in the rainbow, then the conclusion is that light blue has a slightly greener hue than dark blue.

Linguistically, that's just silly talk. Both light blue and dark blue should have a hue of blue. But my logic says different. On the off chance that there is something wrong with my logic, I will test the hypothesis by means of the most sophisticated psychophysical method available today. I asked a few hundred of my closest friends for their judgment using SurveyMonkey.

Respondents were asked to pick the best example of light blue from this image:

And then they were asked to pick the best example of dark blue from this image:

My survey didn't show the numbers below each color patch. These are the HSL numbers for that color. HSL stands for hue, saturation, and lightness. These values are computed directly from the RGB values, and roughly correlate to CIELAB hue angle, chroma, and L*. The number in the first row is the hue. You can see that the colors are arranged in hue order in steps of 5.
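For the curious, the usual RGB-to-HSL arithmetic looks something like the sketch below. The function name is invented, and the hue here is reported in degrees from 0 to 360. Some tools report hue on other scales, so these numbers need not match the ones under the patches.

```python
def rgb_to_hsl(r, g, b):
    """Convert 8-bit RGB to (hue in degrees, saturation 0-1, lightness 0-1)."""
    r, g, b = r / 255, g / 255, b / 255
    hi, lo = max(r, g, b), min(r, g, b)
    lightness = (hi + lo) / 2
    if hi == lo:
        return 0.0, 0.0, lightness  # achromatic: hue is undefined, reported as 0
    delta = hi - lo
    saturation = delta / (1 - abs(2 * lightness - 1))
    # Hue depends on which channel is largest and on the gap between the other two.
    if hi == r:
        hue = ((g - b) / delta) % 6
    elif hi == g:
        hue = (b - r) / delta + 2
    else:
        hue = (r - g) / delta + 4
    return hue * 60, saturation, lightness

print(rgb_to_hsl(0, 0, 255)[0])      # 240.0
print(rgb_to_hsl(0, 255, 255)[0])    # 180.0
print(rgb_to_hsl(128, 128, 255)[0])  # 240.0
print(rgb_to_hsl(0, 0, 128)[0])      # 240.0
```

In HSL, a light blue and a dark blue built by adding white or black to pure blue share exactly the same hue number. The survey question is whether people pick patches that follow that rule.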

I got 40 people to respond to the survey before SurveyMonkey told me that I needed to pay them money to get more responses. Luckily, there were enough responses for me to make a meaningful statistical judgment. Statistics tells me that the HSL hue values for light blue and dark blue are different, and are different in the direction that I predicted. The average response for light blue was #5, which has a hue of 40. The average response for dark blue was #E, with a hue of 155. The average of the differences between each respondent's two answers was 16. This corresponds to about 23 degrees of CIELAB hue angle, which is practically significant. The z score of the difference was +8.12, which is very statistically significant.
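One common way to get a z score from paired answers like these is to divide the mean of the per-person differences by its standard error. The sketch below assumes that approach, which may not be exactly the calculation behind the +8.12 above. The function name and the sample numbers are invented for illustration and are not the survey data.

```python
import math
import statistics

def paired_z(light_hues, dark_hues):
    """Return (mean difference, z score) for paired hue picks.

    Each position in the two lists belongs to the same respondent.
    """
    diffs = [dark - light for light, dark in zip(light_hues, dark_hues)]
    mean_diff = statistics.mean(diffs)
    # Standard error of the mean difference, using the sample standard deviation.
    std_err = statistics.stdev(diffs) / math.sqrt(len(diffs))
    return mean_diff, mean_diff / std_err

# Made-up picks from five imaginary respondents.
light = [140, 145, 135, 150, 140]
dark = [155, 160, 155, 165, 150]
mean_diff, z = paired_z(light, dark)
print(mean_diff, round(z, 2))
```

Working with each person's difference, rather than the two group averages, is what lets a funky monitor partly cancel itself out. With a sample as small as the made-up one here, a t test would be the more careful choice.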

I see several possibilities here:

1. The test was biased toward the middle, so naturally the answers gravitated that way. Essentially I was forcing the middle card. Poor choice on my part.

2. HSL doesn't accurately reflect the way the cones in our eyes work in terms of hue.

3. Light blue and dark blue have a different hue in terms of our perception, which happens at a higher level, that is, somewhere in that tangled mess of neurons.

I honestly think that any of these could be the explanation for my very scientific SurveyMonkey experiment. It's likely that all of them come into play, but I don't know which effect is the largest. On the other hand, these results do not disprove my thesis.

[Subtle point: There are multiple weak points in this survey, not the least of which are the facts that the computer monitors that people used were likely not calibrated, and that the responses of people's eyes are somewhat different. But these two factors are mitigated by the fact that I looked at the difference of the two hue values. If a given monitor displays light blue a little funky, then (maybe) it will display dark blue in a similar funky manner.]

What gives?

I discussed three quirks in our perception of hue.

1. Pink and red do not have the same hue. Pink and magenta do.

2. Brown and orange have the same hue. Brown is dark orange.

3. Light blue and dark blue perhaps have a different hue.

It took me a while to puzzle this through, but I think I got it. Here are the basic colors: white, black, gray, red, orange, yellow, green, blue, purple, pink, and brown.

There are two other colors that are eager to join the list of basic colors. Quoting a previous post of mine: "Some languages (namely Japanese, Russian, and Italian) have further broken the blue category into sky blue and navy blue."

When presented with a chromatic color, we subconsciously categorize it. What buckets does the subconscious have for this categorization? The eleven basic colors. Hence the confusion. While we would like to think that the brain has this neat and tidy scheme for classifying colors according to the scientific notion of hue angle, the brain actually uses the basic colors as the buckets. When my eye sees a color close to brown, the brain classifies it as brown, rather than "a color with the same hue as orange".

The fact that most of the chromatic basic colors are also rainbow colors just confuses those of us who try to tease out how the brain works.